In my last blog post, I wrote about big data recommendation engines. After receiving feedback and questions, I wrote this post to introduce the basics of machine learning and modeling. I hope you will find it useful.

Let us start with the roots of Machine Learning (ML).

We know that hardware speed and capability increase at a faster rate than software, and the gap keeps widening. Since the 1950s, computer scientists have tried to use that increasing hardware speed to give computers the ability to learn. Artificial intelligence (AI) is the human-like intelligence exhibited by machines or software. It is also an academic field of study. Major AI researchers and textbooks define the field as "the study and design of intelligent agents", where an intelligent agent is a system that perceives its environment and takes actions that maximize its chances of success. John McCarthy, who coined the term in 1955, defines it as "the science and engineering of making intelligent machines".

AI research is highly technical and specialized, and is deeply divided into subfields that often fail to communicate with each other. Some of the division is due to social and cultural factors: subfields have grown up around particular institutions and the work of individual researchers. AI research is also divided by several technical issues. Some subfields focus on the solution of specific problems. Others focus on one of several possible approaches or on the use of a particular tool or towards the accomplishment of particular applications.

The central problems (or goals) of AI research include reasoning, knowledge, planning, learning, natural language processing (communication), perception and the ability to move and manipulate objects. General intelligence, which attempts to emulate human thinking, is still among the field's long-term goals. Currently popular approaches include statistical methods, computational intelligence and traditional symbolic AI. A large number of tools are used in AI, including versions of search and mathematical optimization, logic, methods based on probability and economics, and many others. The AI field is interdisciplinary: a number of sciences and professions converge in it, including computer science, psychology, linguistics, philosophy and neuroscience, as well as other specialized fields such as artificial psychology.

Birth of Machine Learning

ML is a subfield of AI concerned with computer programs that learn from experience. ML is about building computer programs that improve their performance at some task (that is, they learn) using observed data or past experience. An ML program (the learner) tries to learn from the observed data (examples) and generates a model that can respond to (predict) future data or describe the data seen. In 1959, Arthur Samuel defined ML as a "Field of study that gives computers the ability to learn without being explicitly programmed".

Tom M. Mitchell provided a widely quoted, more formal definition: "A computer program is said to learn from experience E with respect to some class of tasks T and performance measure P, if its performance at tasks in T, as measured by P, improves with experience E". This definition is notable for its defining machine learning in fundamentally operational rather than cognitive terms, thus following Alan Turing's proposal in Turing's paper "Computing Machinery and Intelligence" that the question "Can machines think?" be replaced with the question "Can machines do what we (as thinking entities) can do?"

The field was founded on the claim that a central property of humans, intelligence (the sapience of Homo sapiens), "can be so precisely described that a machine can be made to simulate it". This raises philosophical and social issues about the nature of the mind and the ethics of creating artificial beings endowed with human-like intelligence, issues which have been addressed by myth, fiction and philosophy since antiquity. Artificial intelligence has been the subject of tremendous optimism but has also suffered stunning setbacks. Today, however, it has become an essential part of the technology industry, providing the heavy lifting for many of the most challenging problems in computer science.

Data Mining and Machine Learning

For years now, we have been familiar with data mining in the context of business intelligence. Is data mining machine learning? Generally, data mining (sometimes called data or knowledge discovery) is the process of analyzing data from different perspectives and summarizing it into useful information: information that can be used to increase revenue, cut costs, or both. Data mining software is one of a number of analytical tools for analyzing data. It allows users to analyze data from many different dimensions or angles, categorize it, and summarize the relationships identified. Technically, data mining is the process of finding correlations or patterns among dozens of fields in large relational databases.

These two terms are commonly confused, as they often employ the same methods and overlap significantly. They can be roughly defined as follows:

Machine learning generally focuses on prediction, based on known properties learned from the training data.

Data mining focuses on the discovery of (previously) unknown properties in the data. This is the analysis step of Knowledge Discovery in Databases (KDD).

The two areas overlap in many ways: data mining uses many machine learning methods, but often with a slightly different goal in mind. On the other hand, machine learning also employs data mining methods as "unsupervised learning" or as a preprocessing step to improve learner accuracy. Please read further in the blog to learn how it is applied.

ML and NON-ML Algorithms

A few days back, in a class setting, I was asked what the difference is between ML and NON-ML algorithms as we find them in computer science. Here is my view.

ML algorithms are, loosely speaking, non-deterministic: what they produce depends on the data they learn from. They evolve continually, with the goal of optimizing a set of model parameters against an objective function, e.g., detecting fraud accurately or predicting patient mortality. They often run in a distributed computing environment and adopt a platform model. Non-ML algorithms are mostly deterministic and, in general, do not require distributed computing. Their goals are focused on one particular, fixed objective. Let me give two examples to explain the differences.

The classic NON-ML example is heapsort, a comparison-based sorting algorithm. It runs in O(n log n) time in both the average and the worst case; its best case is also O(n log n) for distinct keys and drops to O(n) only when all keys are equal. Heapsort is part of the selection sort family; it improves on the basic selection sort by using a logarithmic-time priority queue rather than a linear-time search. Algorithms like this express gradual improvement upon a base technique. The goal is to reduce complexity and improve performance for a particular task, such as sorting.
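To make the deterministic side concrete, here is a minimal Python sketch of heapsort. It is only an illustration (the function name `heapsort` and the sample list are my own choices), but it shows the key point: the same input always produces the same output, and nothing is learned from data.

```python
def heapsort(items):
    """Return a new sorted list, using a binary max-heap stored in a list."""
    a = list(items)
    n = len(a)

    def sift_down(root, end):
        # Push a[root] down until neither child inside a[:end] is larger.
        while True:
            child = 2 * root + 1
            if child >= end:
                return
            if child + 1 < end and a[child + 1] > a[child]:
                child += 1
            if a[root] >= a[child]:
                return
            a[root], a[child] = a[child], a[root]
            root = child

    # Build the max-heap bottom-up.
    for start in range(n // 2 - 1, -1, -1):
        sift_down(start, n)

    # Repeatedly move the current maximum to the end of the unsorted prefix.
    for end in range(n - 1, 0, -1):
        a[0], a[end] = a[end], a[0]
        sift_down(0, end)
    return a


print(heapsort([5, 3, 8, 1, 9, 2]))
```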

The classic ML example is logistic regression, which tries to predict a binary output from a set of input data. Logistic regression is a statistical method for analyzing a dataset in which there are one or more independent variables that determine an outcome. The outcome is measured with a dichotomous variable (one with only two possible outcomes). The goal of logistic regression is to find the best-fitting model to describe the relationship between the dichotomous characteristic of interest (the dependent, response or outcome variable) and a set of independent (predictor or explanatory) variables.
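For contrast, here is a rough sketch of logistic regression with a single input variable, fitted by plain gradient descent. Everything specific in it is an assumption made for illustration: the data (hours studied versus pass/fail) is made up, and the learning rate and number of epochs are arbitrary. Unlike heapsort, the behavior of `predict` is not written by hand; it comes from parameters fitted to the examples.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(xs, ys, learning_rate=0.1, epochs=5000):
    """Fit p(y=1 | x) = sigmoid(w * x + b) by batch gradient descent on log-loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in zip(xs, ys):
            error = sigmoid(w * x + b) - y  # derivative of log-loss w.r.t. the score
            grad_w += error * x
            grad_b += error
        w -= learning_rate * grad_w / n
        b -= learning_rate * grad_b / n
    return w, b


def predict(w, b, x):
    return 1 if sigmoid(w * x + b) >= 0.5 else 0


# Made-up training data: hours studied and whether the student passed (1) or not (0).
hours = [0.5, 1.0, 1.5, 2.0, 3.5, 4.0, 4.5, 5.0]
passed = [0, 0, 0, 0, 1, 1, 1, 1]

w, b = fit_logistic(hours, passed)
print(predict(w, b, 1.0), predict(w, b, 4.5))
```

Change the training data and the fitted `w` and `b` change with it, which is exactly the difference from the fixed logic of a sorting routine.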

ML Modeling and Big Data

A model, then, is a structure that represents or summarizes some data. Its summarization process is based on an algorithm.

Here is an example. An ML program gets a set of patient cases with their diagnoses. The program will either:

Predict a disease present in future patients, or

Describe the relationship between diseases and symptoms

So, ML is like searching a very large space of hypotheses to find the one that best fits the observed data and that generalizes well to observations outside the training set.

The goal is to tell the computer what task we want it to perform and have it learn to perform that task efficiently. ML puts the emphasis on learning. This is different from expert systems, where the emphasis is on expert knowledge, a traditional basis of AI. Expert systems do not learn from experience; they encode expert knowledge about how particular kinds of decisions are made.

ML is an interdisciplinary field using principles and concepts from statistics, computer science, applied mathematics, cognitive science, engineering, economics and neuroscience. ML algorithms and techniques are found in Data Mining, Pattern Recognition, Neural Networks and other sophisticated research areas.

There are many compelling cases for ML. Here are a few of them.

- When expertise does not exist (navigating on Mars)

- Solution cannot be expressed by a deterministic equation (face recognition)

- Solution changes in time (routing on a computer network)

- Solution needs to be adapted to particular cases (user biometrics)

Now, let us broadly discuss ML algorithms and see how big data technology has made them easier to apply.

The algorithms come in several categories.

Supervised learning - It is used when the observed data includes the correct or expected output.

Example: Fraud detection

Detection - If output is binary (Y/N, 0/1, True/False).

Classification - If output is one of several classes (e.g., output is either low, medium, or high).

Example: Credit Scoring

Two classes of customers asking for a loan: low-risk and high-risk.

Input features are their income and savings.

Classifier using discriminant: IF income > θ1 AND savings > θ2 THEN low-risk ELSE high-risk

So, finding the right values for θ1 and θ2 is part of learning.
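Here is one simple way the credit scoring discriminant could be learned, sketched in Python. It is an assumption-laden illustration, not a production method: the customer records are made up (income and savings in thousands), and the "learning" is a brute-force search over candidate thresholds that keeps the pair (θ1, θ2) with the fewest training errors.

```python
def classify(income, savings, theta1, theta2):
    """The discriminant from the text: both thresholds must be exceeded."""
    return "low-risk" if income > theta1 and savings > theta2 else "high-risk"


def candidate_thresholds(values):
    """One threshold below the smallest value, plus midpoints between neighbors."""
    ordered = sorted(set(values))
    midpoints = [(a + b) / 2 for a, b in zip(ordered, ordered[1:])]
    return [ordered[0] - 1] + midpoints


def learn_thresholds(customers):
    """Return (training_errors, theta1, theta2) with the fewest training errors."""
    best = None
    for theta1 in candidate_thresholds([c[0] for c in customers]):
        for theta2 in candidate_thresholds([c[1] for c in customers]):
            errors = sum(
                classify(income, savings, theta1, theta2) != label
                for income, savings, label in customers
            )
            if best is None or errors < best[0]:
                best = (errors, theta1, theta2)
    return best


# Made-up labeled examples: (income, savings, known risk class).
customers = [
    (25, 5, "high-risk"), (30, 20, "high-risk"),
    (60, 4, "high-risk"), (45, 8, "high-risk"),
    (50, 15, "low-risk"), (70, 30, "low-risk"),
    (55, 12, "low-risk"), (80, 18, "low-risk"),
]

errors, theta1, theta2 = learn_thresholds(customers)
print(errors, theta1, theta2)
```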

Other classifiers use density estimation (instead of finding a discriminant), where each class is represented by a probability density function (e.g., Gaussian). There are several classification applications in use today, including face recognition, character recognition, speech recognition and medical diagnosis.
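As a sketch of the density estimation idea, the Python below fits one Gaussian per class to a single feature and assigns a new value to the class whose prior-weighted density is highest. The income figures and class priors are made up for illustration, and using one feature with one Gaussian per class is my simplifying assumption.

```python
import math


def fit_gaussian(values):
    """Estimate the mean and variance of one class from its examples."""
    n = len(values)
    mean = sum(values) / n
    variance = sum((v - mean) ** 2 for v in values) / n
    return mean, variance


def gaussian_pdf(x, mean, variance):
    return math.exp(-((x - mean) ** 2) / (2 * variance)) / math.sqrt(2 * math.pi * variance)


def classify(x, models, priors):
    """Pick the class with the largest prior * density at x."""
    return max(models, key=lambda label: priors[label] * gaussian_pdf(x, *models[label]))


# Made-up incomes (in thousands) for each class.
incomes = {
    "low-risk": [50, 55, 70, 80],
    "high-risk": [25, 30, 45, 60],
}
models = {label: fit_gaussian(values) for label, values in incomes.items()}
priors = {"low-risk": 0.5, "high-risk": 0.5}

print(classify(30, models, priors), classify(75, models, priors))
```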

Regression - If output is a continuous value (e.g., a price).

Example: Determining the price of a car

x: car attributes, y: price (y = wx+w0)

Choosing the regression model (e.g., linear, quadratic) and finding the right values for its w parameters is fundamental to learning.
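For the car example, the w parameters of the linear model y = wx + w0 can be found with ordinary least squares. The sketch below assumes a single attribute x (the car's age in years) and made-up prices in thousands; the closed-form formulas are the standard ones for a one-variable linear fit.

```python
def fit_line(xs, ys):
    """Least-squares estimates of w and w0 in y = w * x + w0."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    w = sxy / sxx
    w0 = mean_y - w * mean_x
    return w, w0


# Made-up data: car age in years and observed price in thousands.
ages = [1, 2, 3, 4, 5]
prices = [18.5, 15.5, 14.0, 12.5, 9.5]

w, w0 = fit_line(ages, prices)
predicted_price = w * 6 + w0  # price estimate for a six-year-old car
print(w, w0, predicted_price)
```

Here the learned slope is negative: each extra year of age lowers the predicted price.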

Unsupervised learning - When the correct output is not given with the observed data, this method is used.

ML tries to learn relations or patterns among the components of the data (also called attributes or features).

An ML program can group the observed data into classes and assign the observations to these classes.

Example: Finding the right number of classes and their centers or discriminant is learning.

Clustering is used in customer segmentation in CRM, in learning motifs (sequences of amino acids that occur in proteins), polling populations, student segmentation etc.
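A small k-means sketch in Python shows how cluster centers can be learned without any labels. The assumptions are mine: the data is a made-up list of monthly customer spend values, the number of clusters is fixed at three, and the starting centers are chosen by hand. Finding the right number of classes, as mentioned above, would need an extra step that this sketch leaves out.

```python
def assign(points, centers):
    """Put each point into the cluster of its nearest center."""
    clusters = [[] for _ in centers]
    for p in points:
        nearest = min(range(len(centers)), key=lambda i: abs(p - centers[i]))
        clusters[nearest].append(p)
    return clusters


def kmeans_1d(points, initial_centers, max_iterations=100):
    """Alternate assignment and center updates until the centers stop moving."""
    centers = list(initial_centers)
    for _ in range(max_iterations):
        clusters = assign(points, centers)
        new_centers = [
            sum(c) / len(c) if c else centers[i]  # keep an empty cluster's center
            for i, c in enumerate(clusters)
        ]
        if new_centers == centers:
            break
        centers = new_centers
    return centers, assign(points, centers)


# Made-up monthly spend per customer; no segment labels are given.
spend = [12, 15, 14, 80, 85, 78, 210, 220]
centers, segments = kmeans_1d(spend, initial_centers=[0.0, 100.0, 250.0])
print(centers)
print(segments)
```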

Reinforcement learning - It is used when the correct output is a sequence of actions, and not a single action or output.

The model produces actions and receives rewards (or punishments). The goal is to find a set of actions that maximizes rewards (and minimizes punishments).

Example: Game playing, where a single move by itself is not important. ML evaluates a sequence of moves and determines how good the game-playing policy is.
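To illustrate learning from sequences of moves, here is a tiny tabular Q-learning sketch. Q-learning is one common reinforcement learning technique that I picked for the example. The "game" is a made-up corridor of five cells where only reaching the last cell pays a reward, and the discount factor, learning rate, episode count and random seed are arbitrary choices.

```python
import random

N_STATES = 5        # corridor cells 0..4; reaching cell 4 ends the episode
ACTIONS = (-1, +1)  # move left or move right
GAMMA = 0.9         # discount factor for future rewards
ALPHA = 0.5         # learning rate


def step(state, action):
    """Apply a move; only arriving at the last cell is rewarded."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def q_learning(episodes=300, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            action = rng.choice(ACTIONS)  # explore with random moves
            next_state, reward, done = step(state, action)
            best_next = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
            target = reward + GAMMA * best_next
            q[(state, action)] += ALPHA * (target - q[(state, action)])
            state = next_state
    return q


def greedy_policy(q):
    """Best-valued move in every non-terminal cell."""
    return [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_STATES - 1)]


policy = greedy_policy(q_learning())
print(policy)
```

No single move is rewarded except the last one, yet the learned values still favor moving right from every cell, because each move is judged by the rewards that follow it.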

Concept learning - In this method, the machine learner predicts the value of some concept (e.g., playing some sport) given the values of some attributes (e.g., temperature, humidity, wind speed, sky outlook) for some past observations or examples.

Example: Predict PLAY as Yes or No

From the attribute values of a past observation: outlook=sunny, temperature=hot, humidity=high, windy=false

Other types of concept learning are instance-based learning, explanation-based learning, Bayesian learning, case-based learning, statistical learning etc.
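One classic way to learn such a concept is the Find-S algorithm, sketched below in Python. I chose it only because it is short; the four past observations are made up. Find-S starts from the first positive example and generalizes an attribute to "?" (any value) whenever another positive example disagrees on it.

```python
def find_s(examples):
    """Return the most specific hypothesis covering all positive examples."""
    hypothesis = None
    for attributes, play in examples:
        if play != "Yes":
            continue  # Find-S ignores negative examples
        if hypothesis is None:
            hypothesis = dict(attributes)
        else:
            for name in hypothesis:
                if hypothesis[name] != attributes[name]:
                    hypothesis[name] = "?"  # generalize: any value is acceptable
    return hypothesis


def predict(hypothesis, attributes):
    if hypothesis is None:
        return "No"
    matches = all(v == "?" or attributes[k] == v for k, v in hypothesis.items())
    return "Yes" if matches else "No"


# Made-up past observations: (attribute values, PLAY).
examples = [
    ({"outlook": "overcast", "temperature": "hot", "humidity": "normal", "windy": False}, "Yes"),
    ({"outlook": "overcast", "temperature": "mild", "humidity": "normal", "windy": False}, "Yes"),
    ({"outlook": "sunny", "temperature": "hot", "humidity": "high", "windy": False}, "No"),
    ({"outlook": "rainy", "temperature": "mild", "humidity": "high", "windy": True}, "No"),
]

hypothesis = find_s(examples)
query = {"outlook": "sunny", "temperature": "hot", "humidity": "high", "windy": False}
print(hypothesis)
print(predict(hypothesis, query))
```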

Generalization - In this method, the machine learner uses a collection of observations (called the training set) for learning.

Good generalization requires reducing the error measured when the learner is evaluated on a testing set. Here, we avoid model overfitting, which happens when the training error is low and the generalization error is high.

Example: Find a polynomial of order n-1

It fits exactly n points with zero training error.

It does not mean that the model will perform well with unseen data.
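The polynomial example can be checked with a few lines of Python. The sketch evaluates the unique polynomial of order n-1 through n points using Lagrange interpolation. The five training points (roughly following y = x with a little noise) and the held-out point are made up. The training error comes out as zero, but the prediction for the unseen point is far off, which is what overfitting looks like.

```python
def interpolate(xs, ys, x):
    """Evaluate at x the order n-1 polynomial that passes through all n points."""
    total = 0.0
    for i, (xi, yi) in enumerate(zip(xs, ys)):
        term = yi
        for j, xj in enumerate(xs):
            if j != i:
                term *= (x - xj) / (xi - xj)
        total += term
    return total


# Made-up training set: five points that roughly follow y = x.
train_x = [0.0, 1.0, 2.0, 3.0, 4.0]
train_y = [0.0, 1.2, 1.8, 3.3, 3.9]

# Made-up held-out observation that follows the same trend.
test_x, test_y = 6.0, 6.1

training_error = max(
    abs(interpolate(train_x, train_y, x) - y) for x, y in zip(train_x, train_y)
)
test_error = abs(interpolate(train_x, train_y, test_x) - test_y)
print(training_error, test_error)
```

A straight line through the same five points would not hit every training point exactly, but it would land much closer to the held-out observation.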

Now you know how ML modeling can be used to solve practical problems we face day to day. You also know that it all depends on the data at hand and on our objectives. To our advantage, we have many ways to apply ML for our benefit. If we can handle larger data sets, we can solve bigger problems.

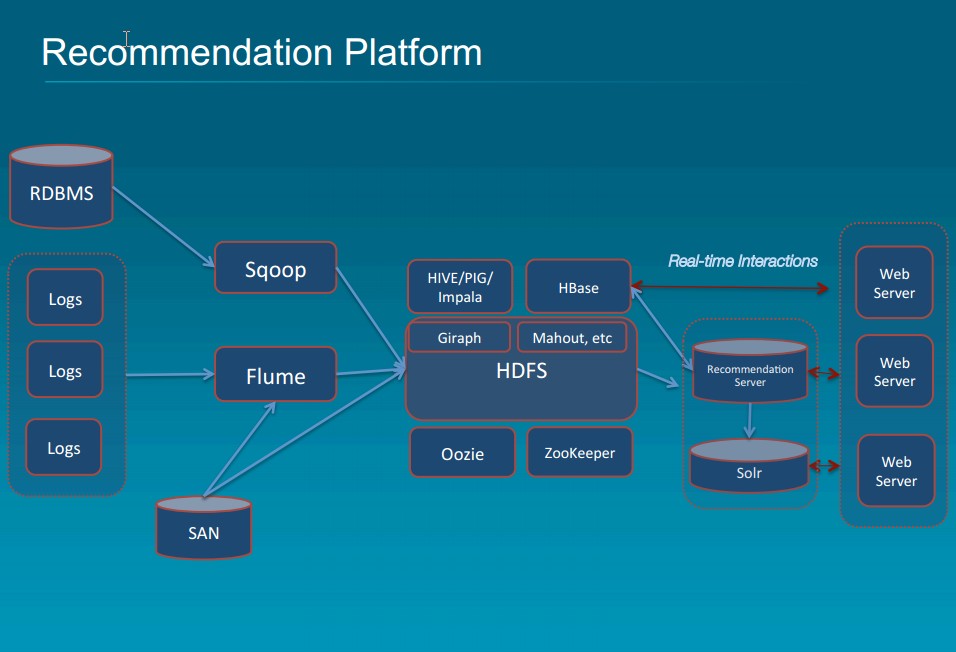

With the ability to process large sets of data with variety and velocity, Big Data technology (open source Apache Hadoop, Solr, Cassandra, Storm, Kafka, MongoDB, R), empowered by swift cloud deployment, has definitely made ML modeling more usable. Let us now discuss some ML application areas, and then conclude with a goal we all should strive for.

Conclusion

The recent rise of big data solution deployments has accelerated the application of ML. Now, we find it being used successfully in the areas of:

- Medical diagnosis

- Market basket analysis

- Image/text retrieval

- Automatic speech recognition

- Object, face, or handwriting recognition

- Financial prediction

- Bioinformatics (e.g., protein structure prediction)

- Robotics

I will be speaking at Silicon Valley Code Camp 2014. If you are in the San Francisco Bay Area, please attend the session. It is FREE. Please find the session details under "Developing Real Time Recommendation Engine".
