The popular log analysis system Splunk is expensive for many use cases. Is there an open source alternative that integrates well with the Hadoop ecosystem? The answer is Logstash, a JVM-based tool that works hand in hand with Elasticsearch, the open source search engine that has been put to use by everyone from Netflix to GitHub. With Logstash, any data that carries a timestamp of some kind can be considered log data and can be ingested and processed according to user-defined rules. If you want to know how DreamHost uses it, see the presentation here.
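
To make the "anything with a timestamp is log data" idea concrete, here is a minimal Python sketch (not Logstash code) of roughly what Logstash's date filter does. It tries a list of user-supplied timestamp formats in order and normalizes the first match into an `@timestamp` field. The format list and the `to_event` helper are hypothetical. Treating timestamps that have no UTC offset as UTC is an assumption of this sketch.

```python
from datetime import datetime, timezone

# Hypothetical format list, playing the role of the user-defined rules.
TIMESTAMP_FORMATS = [
    "%Y-%m-%d %H:%M:%S,%f",   # e.g. 2014-11-15 21:56:17,844 (Elasticsearch log style)
    "%d/%b/%Y:%H:%M:%S %z",   # e.g. 15/Nov/2014:21:56:17 -0500 (Apache access log style)
]


def to_event(raw_timestamp, message):
    """Return a Logstash-style event dict with a normalized UTC @timestamp."""
    for fmt in TIMESTAMP_FORMATS:
        try:
            ts = datetime.strptime(raw_timestamp, fmt)
        except ValueError:
            continue  # this rule does not match; try the next one
        if ts.tzinfo is None:
            # Assumption for this sketch: timestamps without an offset are UTC.
            ts = ts.replace(tzinfo=timezone.utc)
        utc = ts.astimezone(timezone.utc)
        return {"@timestamp": utc.isoformat(timespec="milliseconds"), "message": message}
    raise ValueError("no timestamp rule matched: %r" % raw_timestamp)


print(to_event("2014-11-15 21:56:17,844", "added node")["@timestamp"])
# 2014-11-15T21:56:17.844+00:00
```

Adding support for a new log source then amounts to appending one more format to the list, which is the same spirit as adding a pattern to a date filter.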

By itself, Logstash is no direct competition for Splunk, but it is part of a stack of components that compete as a whole. The so-called ELK stack (Elasticsearch for search, Logstash for ingestion and processing, and Kibana for reporting and visualization) is a credible Splunk replacement: it is an Apache-licensed open source project with strong community involvement. It also has a lower cost barrier to entry than Splunk, since the entire stack can be used for free, while paid support plans are available.

Elasticsearch's feature list for Logstash 1.4 includes faster installation and startup, plus a revised and simplified plug-in system that lets users write their own input, filter, and output plug-ins. Most significant is a redesigned set of Puppet modules, which allow Logstash deployments to be automated through Puppet on a physical server or a VM. (Docker support for Logstash also exists, courtesy of Arcus.io.) The DreamHost presentation also covers its use of Puppet.
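
Logstash's plug-in model splits the work into input, filter, and output stages. The Python sketch below mimics that shape with plain functions so the data flow is easy to follow. It is not Logstash's actual plug-in API, since real plug-ins are written in Ruby, and every name in it is hypothetical.

```python
from typing import Any, Callable, Dict, Iterable, Iterator, List

Event = Dict[str, Any]


def lines_input(lines: Iterable[str]) -> Iterator[Event]:
    """Input stage (hypothetical): wrap each raw line in an event, like a stdin input."""
    for line in lines:
        yield {"message": line.rstrip("\n")}


def add_tag(tag: str) -> Callable[[Event], Event]:
    """Filter factory (hypothetical): return a filter that appends `tag` to event["tags"]."""
    def _filter(event: Event) -> Event:
        return {**event, "tags": list(event.get("tags", [])) + [tag]}
    return _filter


def run_pipeline(
    source: Iterable[Event],
    filters: List[Callable[[Event], Event]],
    output: Callable[[Event], None],
) -> None:
    """Push every event from the input through each filter in order, then to the output."""
    for event in source:
        for apply_filter in filters:
            event = apply_filter(event)
        output(event)


collected: List[Event] = []
run_pipeline(lines_input(["hello\n", "world\n"]), [add_tag("demo")], collected.append)
print(collected)
# [{'message': 'hello', 'tags': ['demo']}, {'message': 'world', 'tags': ['demo']}]
```

Because each stage only agrees on the event dictionary, a new input, filter, or output can be swapped in without touching the others. That decoupling is what makes a user-extensible plug-in system practical.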

I tried Logstash myself and was impressed by the clarity of its documentation. While I did not attempt a production-type deployment, I was able to get a feel for how easy to use and extensible the stack is. Here is what I found.

Steps

As stated earlier, if you want to do your own Splunk-style log analysis, take a look at the open source Logstash from Elasticsearch. I ran through the tutorials; they were a breeze and highly educational. Detailed documentation is available here. For many use cases, Logstash may be all you need. For a complete exercise, please refer to Michael's tutorial in the references.

For this blog, I followed the tutorial documentation on the Logstash website. My sample output is as follows:

1) Run Logstash with Elasticsearch as the output

[ssabat@localhost logstash-1.4.2]$ bin/logstash -e 'input { stdin { } } output { elasticsearch { host => localhost } }'

[2014-11-15 21:56:17,844][INFO ][cluster.service ] [Cornelius van Lunt] added {[logstash-localhost.localdomain-2958-2010][KN4c5TODTmG2kQM3Io4lKw][localhost.localdomain][inet[/10.0.2.15:9301]]{client=true, data=false},},

.......

...
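
The log line above shows Logstash joining the cluster as a client node (`client=true, data=false`). The REST API on port 9200 is another route into the same index. The Python sketch below builds an indexing request for one event by hand. The daily `logstash-YYYY.MM.dd` index name follows Logstash's default naming. The `logs` document type, the `build_index_request` and `send` helpers, and the host and port values are assumptions for illustration.

```python
import json
import urllib.request
from datetime import datetime, timezone


def build_index_request(event, host="localhost", port=9200, doc_type="logs"):
    """Return (url, body) for indexing `event` into a daily logstash-YYYY.MM.dd index.

    Expects an offset-aware ISO 8601 @timestamp. `doc_type` is an assumption;
    Elasticsearch 7+ replaces mapping types with `_doc`.
    """
    ts = datetime.fromisoformat(event["@timestamp"]).astimezone(timezone.utc)
    index = "logstash-" + ts.strftime("%Y.%m.%d")
    url = "http://%s:%d/%s/%s" % (host, port, index, doc_type)
    return url, json.dumps(event).encode("utf-8")


def send(url, body):
    """POST the document and return Elasticsearch's JSON reply (needs a running node)."""
    request = urllib.request.Request(
        url, data=body, method="POST", headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


url, body = build_index_request({"@timestamp": "2014-11-15T21:56:17.844+00:00", "message": "hello"})
print(url)  # http://localhost:9200/logstash-2014.11.15/logs
```

Keeping request construction separate from `send` lets you inspect the target index and payload without a live cluster.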

2) Query Elasticsearch

[ssabat@localhost ~]$ curl 'http://localhost:9200/_search?pretty'

{
  "took" : 74,
  "timed_out" : false,
  "_shards" : {
    "total" : 5
.....
....
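
The reply above starts with `took` (milliseconds), `timed_out`, and `_shards`, followed by a `hits` object that holds the matching documents. Here is a small Python sketch that runs a URI search with the `q` and `size` parameters and condenses the reply. The helper names are hypothetical. The sketch assumes each indexed event has a `message` field, which is where the stdin input from step 1 puts each typed line.

```python
import json
import urllib.parse
import urllib.request


def search(query="*", size=10, host="localhost", port=9200):
    """Run a URI search (needs a running node) and return the parsed JSON reply."""
    params = urllib.parse.urlencode({"q": query, "size": size})
    url = "http://%s:%d/_search?%s" % (host, port, params)
    with urllib.request.urlopen(url) as response:
        return json.load(response)


def summarize(reply):
    """Condense a _search reply into the fields most useful at a glance."""
    total = reply["hits"]["total"]
    if isinstance(total, dict):  # Elasticsearch 7+ reports {"value": n, "relation": ...}
        total = total["value"]
    return {
        "took_ms": reply["took"],
        "timed_out": reply["timed_out"],
        "shards": reply["_shards"]["total"],
        "total_hits": total,
        "messages": [hit["_source"].get("message") for hit in reply["hits"]["hits"]],
    }
```

Against the local node from step 1, `summarize(search())` reports `took`, `timed_out`, and the shard count from the raw reply, along with the messages typed into Logstash.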

3) Bonus: If you install the kopf plugin, you get a cluster GUI much like the Splunk one you are used to.

Browse to http://localhost:9200/_plugin/kopf/#!/cluster and you will see a beautiful view of your Elasticsearch cluster.
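
kopf runs in the browser and draws its cluster view from Elasticsearch's own REST APIs. If you want the headline numbers in a script instead, the `_cluster/health` endpoint returns the cluster name, its status (green, yellow, or red), and node and shard counts. The Python sketch below fetches that endpoint and formats a one-line summary. The helper names are hypothetical.

```python
import json
import urllib.request


def cluster_health(host="localhost", port=9200):
    """Fetch GET /_cluster/health (needs a running node) as a dict."""
    with urllib.request.urlopen("http://%s:%d/_cluster/health" % (host, port)) as response:
        return json.load(response)


def describe_health(health):
    """Format the fields a cluster overview typically highlights."""
    return "%s: %s, %d nodes (%d data), %d unassigned shards" % (
        health["cluster_name"],
        health["status"],
        health["number_of_nodes"],
        health["number_of_data_nodes"],
        health["unassigned_shards"],
    )
```

On a single-node setup, expect a yellow status: Elasticsearch will not allocate a replica shard on the same node as its primary, so replicas stay unassigned.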

Big Data Event Logging and Analytics

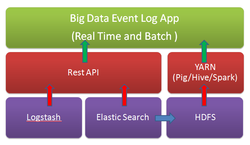

In some cases, you may want to run batch analytics on data stored in an Elasticsearch cluster, and plugins are available for that as well. You can find more on the integration here. Elasticsearch's engine integrates with Hortonworks Data Platform 2.0 and YARN to provide real-time search and batch analytics for your log data, and Hortonworks has published an article on the integration here. So when it comes to log search and analytics in the Hadoop big data ecosystem, we have many choices, and we do not have to pay a fortune for them. The diagram above illustrates the concept and the information flow for a Big Data event log application.
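
The batch side is easiest to picture as a map step and a reduce step over stored events. The Python sketch below runs that idea in memory, counting events per UTC hour from `_source` documents. It is only a stand-in for a real Hadoop job that reads Elasticsearch data through a connector, and `hour_bucket` and `events_per_hour` are hypothetical names.

```python
from collections import Counter
from datetime import datetime, timezone


def hour_bucket(doc):
    """Map step: key each event by the UTC hour of its @timestamp."""
    ts = datetime.fromisoformat(doc["@timestamp"]).astimezone(timezone.utc)
    return ts.strftime("%Y-%m-%dT%H:00Z")


def events_per_hour(docs):
    """Reduce step: count events per hour bucket."""
    return Counter(hour_bucket(doc) for doc in docs)


docs = [
    {"@timestamp": "2014-11-15T21:10:00.000+00:00"},
    {"@timestamp": "2014-11-15T21:56:17.844+00:00"},
    {"@timestamp": "2014-11-15T17:01:00.000-05:00"},
]
print(sorted(events_per_hour(docs).items()))
# [('2014-11-15T21:00Z', 2), ('2014-11-15T22:00Z', 1)]
```

In a Hadoop job, the map step would run in parallel over index shards and the counting would happen in reducers. The logic per event stays the same.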

Business Model

Elasticsearch has also been commercializing Logstash by monetizing analytics, a tactic that hearkens back to the methods used by AppDynamics, New Relic and Famo.us. In Elasticsearch's case, it does so through its Marvel product, which manages and reports on Elasticsearch nodes. Developers can use Marvel for free, but production use costs $500 per year for the first five nodes.

Conclusion

As stated in this blog, the ELK (Elasticsearch, Logstash and Kibana) stack is a powerful framework for event log analysis and visualization. Combined with Hadoop integration, it gives you an open source alternative to Splunk. I did not cover a production deployment using fluent agents to collect the logs, hundreds of grok filters, or multi-node administration challenges; my aim has been to introduce the alternative and help you start your journey in event log management projects. With the IoT (Internet of Things) set to grow for years to come, it is important that we understand log management well and make the best use of it to increase IoT-driven business productivity, performance and profit at scale, and most importantly at an affordable price! You are backed by a strong open source developer community and by substantial venture capital funding.
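
Even without a full deployment, a small illustration of what one grok filter does may help. A grok pattern is a regular expression assembled from named building blocks written as `%{PATTERN:field}`. The Python sketch below expands that syntax into named groups and applies it to a shortened copy of the Elasticsearch log line from step 1. The pattern names are borrowed from grok's library, but the definitions here are simplified and `compile_grok` is hypothetical. This is not the grok implementation that Logstash ships.

```python
import re

# Simplified stand-ins for a few grok patterns (the real definitions are richer).
PATTERNS = {
    "TIMESTAMP_ISO8601": r"\d{4}-\d{2}-\d{2}[T ]\d{2}:\d{2}:\d{2}(?:[.,]\d+)?",
    "LOGLEVEL": r"[A-Z]+",
    "NOTSPACE": r"\S+",
    "DATA": r".*?",
    "GREEDYDATA": r".*",
}
REFERENCE = re.compile(r"%\{(\w+)(?::(\w+))?\}")


def compile_grok(pattern):
    """Expand %{NAME:field} into (?P<field>...) and %{NAME} into (?:...)."""
    def expand(match):
        body = PATTERNS[match.group(1)]
        field = match.group(2)
        return "(?P<%s>%s)" % (field, body) if field else "(?:%s)" % body
    return re.compile(REFERENCE.sub(expand, pattern))


ES_LOG = compile_grok(
    r"\[%{TIMESTAMP_ISO8601:ts}\]\[%{LOGLEVEL:level}\s*\]"
    r"\[%{NOTSPACE:component}\s*\] \[%{DATA:node}\] %{GREEDYDATA:msg}"
)
line = "[2014-11-15 21:56:17,844][INFO ][cluster.service ] [Cornelius van Lunt] added {...}"
print(ES_LOG.match(line).groupdict())
# {'ts': '2014-11-15 21:56:17,844', 'level': 'INFO', 'component': 'cluster.service', 'node': 'Cornelius van Lunt', 'msg': 'added {...}'}
```

Named building blocks are what make grok manageable at scale: a fix to one pattern definition propagates to every filter that references it.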

Reference
